Your Bridge to Ultra-Low-Power AI

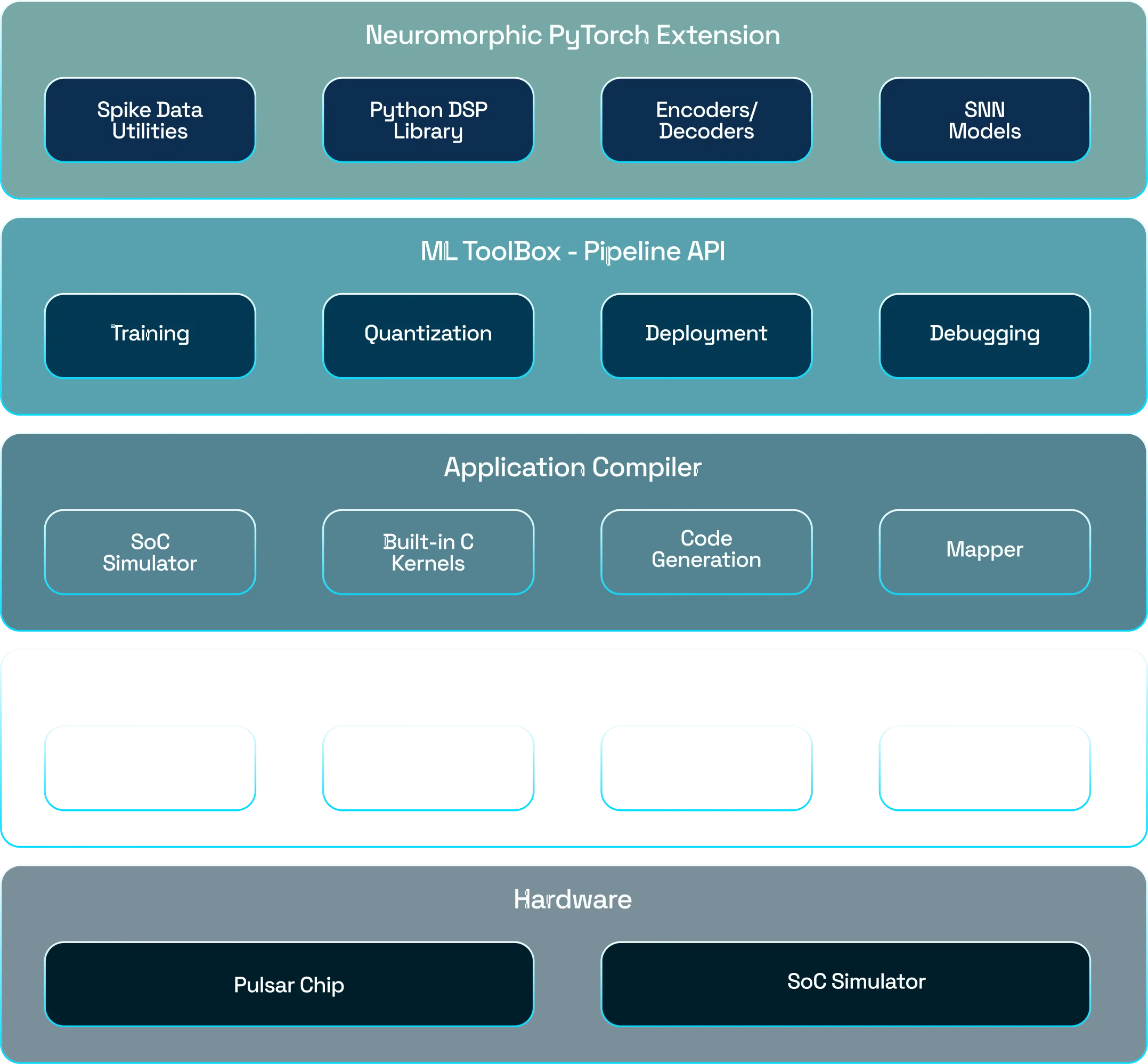

Talamo is a complete Software Development Kit (SDK) for Edge AI development. It’s designed to seamlessly bridge familiar machine learning frameworks to the powerful ultra-low-power world of Spiking Neural Networks (SNNs).

Low-Code Solution

Spend less time writing boilerplate code and more time innovating. Our high-level API simplifies the entire workflow.

No Prior SNN Knowledge Required

You don't need to be an SNN expert. If you can build a model in PyTorch or TensorFlow, you can create a model for our hardware.

Easy Deployment & Compilation

Talamo handles the complex conversion and compilation, turning your existing models into efficient hardware-ready applications.

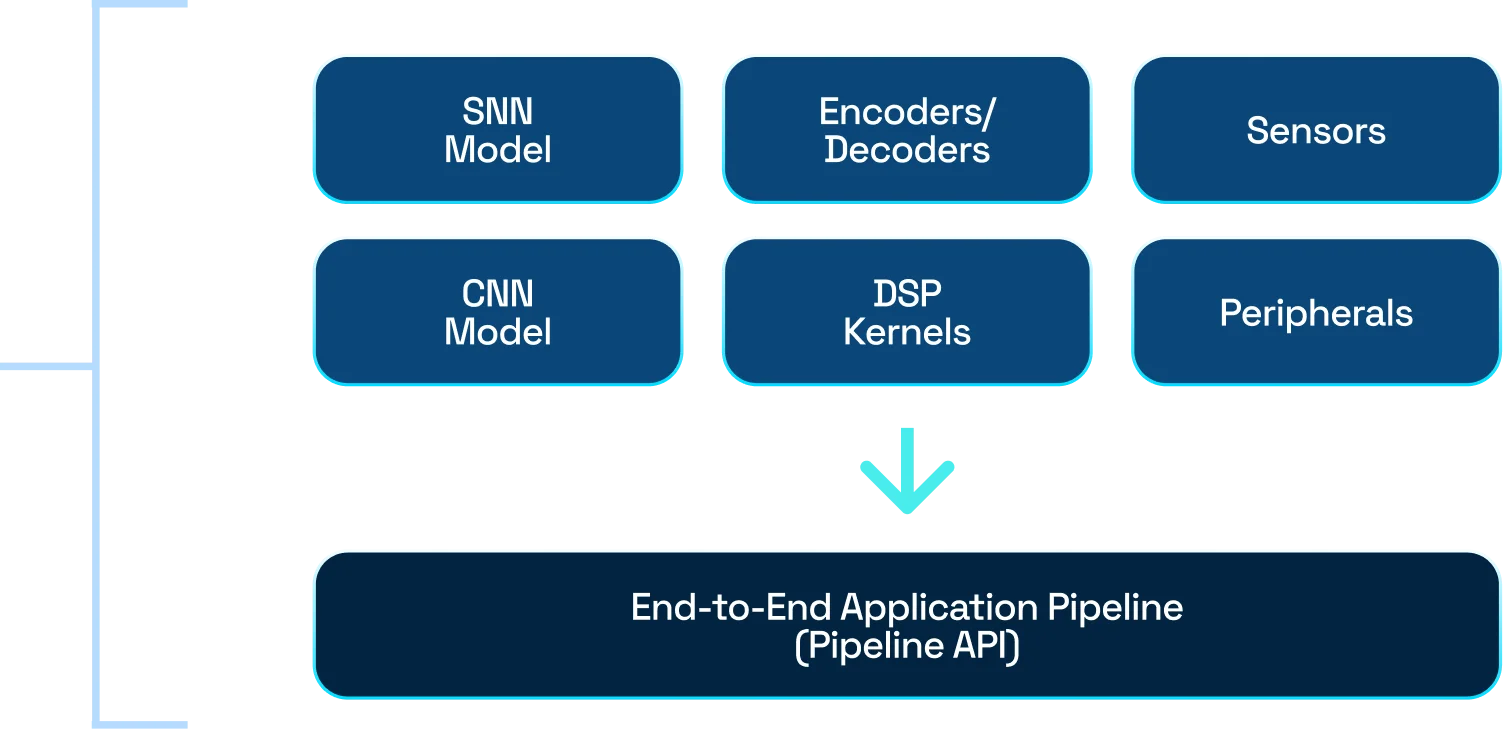

From Model to Deployment, Simplified

- PyTorch Extension for Spiking Neural Networks (SNNs)
- Spike Encoders & Decoders to transform numerical data into spikes
- Modular application pipeline design
- Convert End-to-End application into C Source Code
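To make the encoder and decoder roles concrete, here is a minimal plain-Python sketch. It assumes an integrate-and-fire scheme with reset by subtraction for encoding and spike-count (rate) decoding; `if_encode` and `max_rate_decode` are hypothetical helpers written for illustration, not Talamo's `IFEncoder` or `MaxRateDecoder`, whose internals may differ.

```python
from typing import List


def if_encode(samples: List[float], threshold: float = 1.0) -> List[int]:
    """Hypothetical integrate-and-fire encoder for one channel.

    Each input sample is added to a membrane potential; whenever the
    potential reaches the threshold, a spike (1) is emitted and the
    threshold is subtracted, so any residual charge carries over.
    """
    potential = 0.0
    spikes = []
    for x in samples:
        potential += x
        if potential >= threshold:
            spikes.append(1)
            potential -= threshold  # reset by subtraction
        else:
            spikes.append(0)
    return spikes


def max_rate_decode(spike_trains: List[List[int]]) -> int:
    """Hypothetical rate decoder: index of the neuron with the most spikes."""
    counts = [sum(train) for train in spike_trains]
    return max(range(len(counts)), key=counts.__getitem__)


# Usage: a strong and a weak input channel
strong = if_encode([0.5] * 8)   # -> [0, 1, 0, 1, 0, 1, 0, 1]
weak = if_encode([0.25] * 8)    # -> [0, 0, 0, 1, 0, 0, 0, 1]
print(max_rate_decode([weak, strong]))  # -> 1
```

Stronger inputs cross the threshold more often and therefore produce more spikes, which is what lets a rate-based decoder recover a class index simply by counting.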

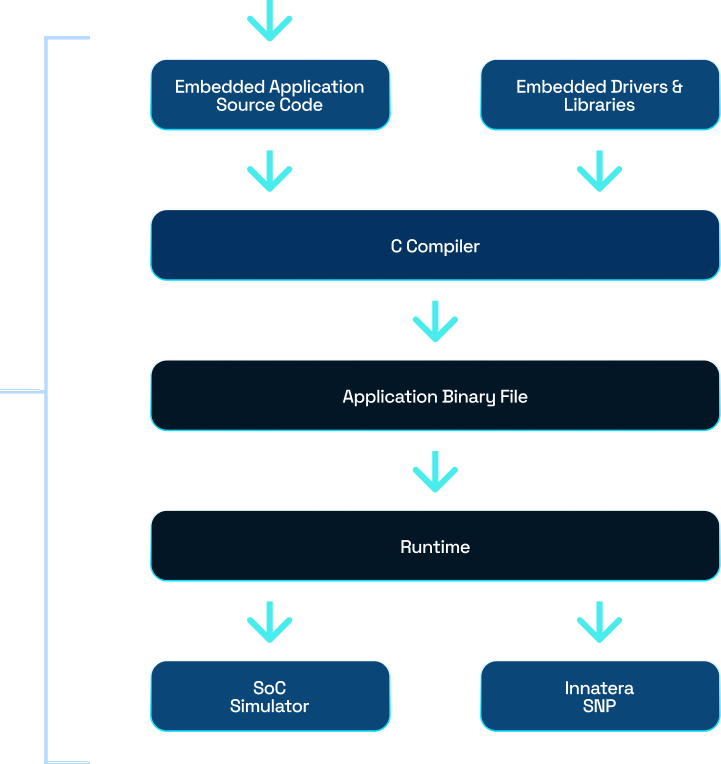

Standard MCU Programming Workflow

Your model-building journey with Talamo

Pulsar brings real-time, event-driven intelligence directly to your devices, enabling sub-millisecond responsiveness at microwatt power levels.

```python
from talamo.encoders.c1 import IFEncoder
from talamo.decoders import MaxRateDecoder
from talamo.snn.containers import Snn
from talamo.pipeline.elements import Pipeline, MFCC

num_features = 32

# MySNNModel is your own SNN model definition (not shown here)
snn_model = MySNNModel()

# End-to-End SNN pipeline including preprocessing
pipe = Pipeline(
    steps=[
        # Mel-frequency cepstral coefficients preprocessing
        MFCC(n_mfcc=num_features, n_fft=512, hop_length=512, n_mels=128, sample_rate=22050),
        IFEncoder(num_encoder_channels=num_features),  # Integrate-and-fire encoder
        Snn(snn_model),
        MaxRateDecoder(),
    ]
)

import torch

lr = 0.2
batch_size = 128

# Parameters for different pipeline steps can be trained.
# snn_params and encoder_params are the parameter groups of the SNN and
# encoder steps, collected by you (not shown here).
optimizer = torch.optim.Adam([
    {"params": snn_params, "lr": lr},
    {"params": encoder_params, "lr": lr * 2},
], lr=lr)
loss_fn = torch.nn.NLLLoss()

# Built-in training function, or implement a custom training loop.
# train_dataset and test_dataset are your own datasets (not shown here).
pipe.fit(
    dataset=train_dataset,
    epochs=50,
    dataloader_type=torch.utils.data.DataLoader,
    dataloader_args={"batch_size": batch_size, "shuffle": True},
    optimizer=optimizer,
    loss_function=loss_fn,
    verbose=2,
)

from talamo.quantization import RoundAndClamp

# Quantize using the Talamo quantizer
weight_quantizer = RoundAndClamp(all_params=pipe.query_torch_params("*.weight"), lower_limit=-128, upper_limit=127)
pipe.quantize(quantizers=[weight_quantizer])

from talamo.device.c1 import Soc

innatera_soc = Soc()
pipe.to(innatera_soc)  # Deploy pipeline to Innatera SoC
pipe.evaluate(dataset=test_dataset)
```
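The weight-quantization step can be pictured with a small sketch. It assumes that "round and clamp" means rounding each weight to the nearest integer and then clipping it into `[lower_limit, upper_limit]`, here the signed 8-bit range used in the workflow; `round_and_clamp` is a hypothetical helper, not Talamo's `RoundAndClamp` class, and any scaling of float weights before rounding is left out.

```python
from typing import List


def round_and_clamp(weights: List[float], lower_limit: int = -128, upper_limit: int = 127) -> List[int]:
    """Hypothetical round-and-clamp quantizer.

    Rounds each weight to the nearest integer (Python's round() sends exact
    halves to the nearest even integer; Talamo's quantizer may use another
    tie rule), then clips the result into [lower_limit, upper_limit].
    """
    return [min(max(round(w), lower_limit), upper_limit) for w in weights]


# Usage: out-of-range values saturate at the int8 limits
print(round_and_clamp([-300.0, -1.4, 0.6, 3.7, 150.2]))  # -> [-128, -1, 1, 4, 127]
```

Clamping after rounding guarantees every stored weight fits the target integer range, at the cost of saturating outliers.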

